Constructive analysis of periodic solutions with interval halving

Boundary Value Problems volume 2013, Article number: 57 (2013)

Abstract

For a constructive analysis of the periodic boundary value problem for systems of non-linear non-autonomous ordinary differential equations, a numerical-analytic approach is developed, which allows one both to study the solvability and to construct approximations to the solution. An interval halving technique is introduced, which makes it possible to weaken significantly the conditions required to guarantee convergence. The main assumption on the equation is that the non-linearity is locally Lipschitzian.

An existence theorem based on properties of approximations is proved. A relation to Mawhin’s continuation theorem is indicated.

MSC: 34B15.

Introduction

In this paper, we shall develop a numerical-analytic approach to the analysis of periodic solutions of systems of non-autonomous ordinary differential equations using the idea introduced in [1]. The method is numerical-analytic in the sense that its realisation consists of two stages concerning, respectively, an explicit construction of certain equations and their numerical analysis, and it is close in spirit to the Lyapunov-Schmidt reduction [2, 3]. However, neither a small parameter nor an implicit function argument is used.

We focus on numerical-analytic schemes based upon successive approximations. In the context of the theory of non-linear oscillations, such types of methods were apparently first developed in [4–8]. We refer the reader to [9–20] for the related bibliography.

For a boundary value problem, the numerical-analytic approach usually replaces the problem by a family of initial value problems for a suitably perturbed system containing a vector parameter which most often has the meaning of the initial value of the solution. The solution of the Cauchy problem for the perturbed system is sought in analytic form by successive approximations, whereas the numerical value of the parameter is determined later from the so-called determining equations.

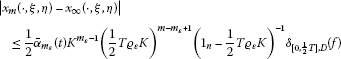

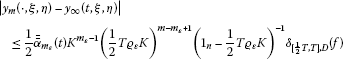

In order to guarantee convergence, a Lipschitz-type condition is usually assumed [9–12] and a smallness restriction of the type

is imposed, where K is the Lipschitz matrix and depends on the period T. The improvement of condition (0.1) consists in maximising the value of the constant .

In this paper, which is a continuation of [1], we provide a constructive approach to the study of solvability of the periodic problem (1.3), (1.4), where the analysis of convergence uses the interval halving technique. We shall see that, under fairly general assumptions, this idea allows one to replace (0.1) by the weaker condition

and, thus, significantly improve the convergence conditions established, in particular, in [6–9, 12]. The restriction imposed on the width of the domain is likewise improved. Finally, an existence theorem based upon the properties of approximate solutions is proved. The proofs use a number of technical facts from [1], which are stated in the course of exposition when appropriate.

1 Problem setting and basic assumptions

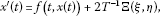

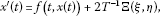

The method that we are interested in deals with T-periodic solutions of a system of non-linear ordinary differential equations

where is a continuous function such that

for all and . Here, T is a given positive number. We restrict ourselves to considering continuously differentiable solutions of system (1.1) and, furthermore, instead of T-periodic solutions of (1.1), we shall always deal with the solutions of the corresponding periodic boundary value problem on the bounded interval ,

The passage to problem (1.3), (1.4) is justified by assumption (1.2).

Our main assumption is that is Lipschitzian with respect to the space variable in a certain bounded set D, which is the closure of a bounded and connected domain in . For the sake of simplicity, we assume that there exists a non-negative constant square matrix K of dimension n such that

for all and .

Here and below, the obvious notation is used, and the inequalities between vectors are understood componentwise. The same convention is adopted implicitly for the operations ‘max’ and ‘min’ so that, e.g., for any , where , , is defined as the column vector with the components , .

2 Notation and symbols

We fix an and a bounded set . The following symbols are used in the sequel:

1. is the unit matrix of dimension n.

2. is the maximal, in modulus, eigenvalue of a matrix K.

3. Given a closed interval , we define the vector by setting

(2.1)

4. , : see (10.5).

5. ∂Ω is the boundary of a domain Ω.

6. : see Definition 10.1.

The notion of a set associated with D, which could have been called an inner r-neighbourhood of D, will often be used in what follows.

Definition 2.1 For any non-negative vector , we put

where

One of the assumptions to be used below means that the inner r-neighbourhood of D is non-empty for r sufficiently large.

Finally, let the positive number be determined by the equality

We refer, e.g., to [12, 21] for the discussion of other ways of introducing the constant and for its meaning. What is important for us here is that is the constant appearing in Lemma 3.2. One can show by computation that

3 p-periodic successive approximations

The method suggested by Samoilenko in [6, 7], originally called the numerical-analytic method for the investigation of periodic solutions, was also referred to later as the method of periodic successive approximations [9–12]. Its scheme, which is described in a form suitable for our purposes by Propositions 3.1 and 3.4 below, is quite simple and deals with the investigation of the parametrised equation

where is a parameter to be chosen later. For convenience of reference, we formulate the statements for the p-periodic problem

where and is arbitrary but fixed.

Following [1], we now describe the original, unmodified, periodic successive approximations scheme for the p-periodic problem (3.2), (3.3) which we are going to modify and which is constructed as follows. With problem (3.2), (3.3), one associates the sequence of functions , , defined according to the rule

for and , where the vector is regarded as a parameter, the value of which is to be determined later.
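As a rough numerical illustration of rule (3.4), the following Python sketch runs the iteration on a uniform grid for a scalar equation. It assumes the classical form of the scheme, x_m(t, z) = z + int_0^t f(s, x_{m-1}(s, z)) ds - (t/p) int_0^p f(s, x_{m-1}(s, z)) ds with x_0 = z. The function names, the trapezoidal quadrature and the test equation x' = -x/2 + sin t are illustrative choices and are not taken from the paper.

```python
import math


def cumulative_trapezoid(values, h):
    """Cumulative trapezoidal integral of samples on a uniform grid with step h."""
    out = [0.0]
    for left, right in zip(values, values[1:]):
        out.append(out[-1] + 0.5 * h * (left + right))
    return out


def periodic_iterations(f, z, p, m, n=400):
    """Grid and samples of x_m(., z) for the assumed form of rule (3.4)."""
    h = p / n
    ts = [i * h for i in range(n + 1)]
    x = [z] * (n + 1)  # x_0(t, z) = z
    for _ in range(m):
        integral = cumulative_trapezoid([f(t, v) for t, v in zip(ts, x)], h)
        total = integral[-1]
        # Subtracting (t/p) * total keeps x_m(0) = x_m(p) = z.
        x = [z + part - (i / n) * total for i, part in enumerate(integral)]
    return ts, x


def mean_residual(f, z, p, m, n=400):
    """(1/p) * int_0^p f(s, x_m(s, z)) ds, the averaged term removed by the scheme."""
    ts, x = periodic_iterations(f, z, p, m, n)
    return cumulative_trapezoid([f(t, v) for t, v in zip(ts, x)], p / n)[-1] / p


def f_test(t, x):
    """Illustrative right-hand side, 2*pi-periodic in t, Lipschitz constant 1/2."""
    return -0.5 * x + math.sin(t)


p = 2.0 * math.pi
# For a right-hand side linear in x the residual is affine in z,
# so two evaluations are enough to locate its root.
d0 = mean_residual(f_test, 0.0, p, 25)
d1 = mean_residual(f_test, 1.0, p, 25)
z_root = -d0 / (d1 - d0)
print(z_root)
```

For this test equation the exact periodic solution is (0.5 sin t - cos t)/1.25, so the computed root can be compared with its initial value -0.8. The two-evaluation shortcut relies on linearity in x; for a genuinely non-linear f a root finder would be needed instead.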

Proposition 3.1 ([12, Theorem 3.17])

Let the function f satisfy the Lipschitz condition (1.5) with a matrix K for which the inequality

holds and, moreover,

Then, for any fixed , the following assertions are true:

1. Sequence (3.4) converges to a limit function

(3.7)

uniformly in .

2. The limit function (3.7) satisfies the p-periodic boundary conditions

3. The function is the unique solution of the Cauchy problem

(3.8)

(3.9)

where

4. Given an arbitrarily small positive ε, one can choose a number such that the estimate

holds for all and , where

Recall that, according to (2.2), condition (3.6) means the non-emptiness of the inner -neighbourhood of the set D, where is the vector given by formula (2.1). This agrees with the natural supposition that, for an approximation technique to be applicable, the domain where the Lipschitz condition is assumed should be wide enough.

The proof of Proposition 3.1 is based on Lemma 3.2 formulated below, which provides an estimate for the sequence of functions , , given by the formula

where and , . We provide the formulation here for a clearer understanding of the constants appearing in the estimates.

Lemma 3.2 ([16, Lemma 3])

For any , one can specify an integer such that

for all and .

It should be noted that estimate (3.13) is optimal in the sense that ε can never be put equal to zero.

Remark 3.3 It follows from [22, Lemma 4] that if , where

then in Lemma 3.2 (here, of course, we think of as of the least integer possessing the property indicated).

The assertion of Proposition 3.1 suggests a natural way to establish a relation between the p-periodic solutions of the given equation (1.3) and those of the perturbed equation (3.8) (or, equivalently, solutions of the initial value problem (3.8), (3.9)). Indeed, it turns out that, by choosing the value of z appropriately, one can use function (3.7) to construct a solution of the original periodic boundary value problem (1.3), (1.4).

Proposition 3.4 Let the assumptions of Proposition 3.1 hold. Then:

1. Given a , the function is a solution of the p-periodic boundary value problem (3.2), (3.3) if and only if z is a root of the equation

(3.15)

2. For any solution of problem (3.2), (3.3) with , there exists a such that .

The important assertion (2) means that equation (3.15), usually referred to as a determining equation, allows one to track all the solutions of the periodic boundary value problem (1.3), (1.4). In such a manner, the original infinite-dimensional problem is reduced to a system of n numerical equations.

The method thus consists of two parts, namely, the analytic part, when the integral equation (3.1) is dealt with by using the method of successive approximations (3.4), and the numerical one, which consists in finding a value of the unknown parameter from equation (3.15). This closely correlates with the idea of the Lyapunov-Schmidt reduction [2, 3].

The main obstacle to an efficient application of Proposition 3.4 is that the function , and, therefore, the mapping are not known explicitly. Nevertheless, it is possible to prove the existence of a solution on the basis of the properties of a certain iteration which is constructed explicitly for a certain fixed m. For this purpose, one studies the approximate determining system

where is defined by the formula

for . This topic is discussed in detail, in particular, in [12], whereas a theorem of the kind specified, which corresponds to the scheme developed here, is proved in Section 9. Our main goal is to obtain a solvability theorem under assumptions weaker than those that would be needed when applying Proposition 3.1.

Indeed, in view of (2.5), assumption (3.5), which is essential for the proof of the uniform convergence of sequence (3.4), can be rewritten in the form

Inequality (3.17) can be treated either as a kind of upper bound for the Lipschitz matrix or as a smallness assumption on the period p, the latter interpretation presenting the scheme as particularly appropriate for the study of high-frequency oscillations.

Without assumption (3.17), Lemma 3.2 does not guarantee the convergence of sequence (3.4) when applied directly along the lines of the proof of Proposition 3.1. Nevertheless, it turns out that this limitation can be overcome and, by using a suitable parametrisation and modifying the scheme appropriately, one can always weaken the smallness condition (3.5) so that the constant on its right-hand side is doubled:

Note also that, although we have in mind to weaken mainly the smallness condition (3.17) guaranteeing the convergence of iterations, it turns out that the techniques suggested here for this purpose allow us to obtain a considerable improvement of condition (3.6) as well (Corollary 6.7).

Moreover, we shall see that, under the weaker condition (3.18), the modified scheme can be used to prove the existence of a periodic solution on the basis of results of computation (Theorem 10.2).
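To see what doubling the constant means in practice, one can test both smallness conditions for a given Lipschitz matrix. The sketch below assumes that (3.5) has the form r(K) < c/p and (3.18) the form r(K) < 2c/p, with c the reciprocal of an assumed numerical value 0.2927 of the constant from Lemma 3.2. These forms, the value of the constant and all function names are assumptions made for illustration. The spectral radius is replaced by a Collatz-Wielandt upper bound, so a positive answer is sufficient but a negative one is not conclusive.

```python
import math

RHO_ASSUMED = 0.2927  # assumed numerical value of the constant from Lemma 3.2


def spectral_radius_upper_bound(K, sweeps=50):
    """Collatz-Wielandt upper bound for r(K) of a non-negative square matrix K."""
    n = len(K)
    v = [1.0] * n
    bound = math.inf
    for _ in range(sweeps):
        w = [sum(K[i][j] * v[j] for j in range(n)) for i in range(n)]
        # For positive v, r(K) <= max_i (K v)_i / v_i.
        bound = min(bound, max(wi / vi for wi, vi in zip(w, v)))
        if min(w) <= 0.0:
            break  # the next vector would not be strictly positive
        top = max(w)
        v = [wi / top for wi in w]
    return bound


def smallness_conditions(K, p, rho=RHO_ASSUMED):
    """Return (unmodified condition holds, condition after halving holds)."""
    r = spectral_radius_upper_bound(K)
    return r < 1.0 / (rho * p), r < 2.0 / (rho * p)


print(smallness_conditions([[1.0]], 2.0 * math.pi))
```

With K = (1) and p = 2π, the first test fails while the second succeeds under the stated assumptions, which is the kind of situation the modified scheme is meant to cover.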

4 Interval halving, parametrisation and gluing

We should like to show that the approach described by Proposition 3.1 can also be used in the cases where the smallness condition (3.5), which guarantees the convergence, is violated. For this purpose, a natural trick based on interval halving can be used, where the unmodified scheme, in a sense, should work twice. However, some care should be taken with the boundary conditions.

Indeed, at first glance, one is tempted to implement halving in the sense that the original scheme should be applied on each of the resulting half-intervals, and thus sequence (3.4) would be constructed twice for problem (3.2), (3.3) with , , and , , , respectively. This is impossible, however, because the boundary conditions on the half-intervals, with trivial exceptions, are never -periodic.

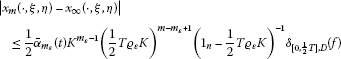

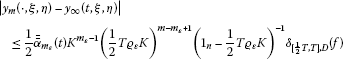

The correct halving scheme is obtained when, along with the periodic boundary value problem (1.3), (1.4), we consider two auxiliary problems

and

where is a free parameter, the value of which is to be determined suitably from the argument related to gluing. The mutual disposition of the graphs of x and y satisfying, respectively, problems (4.1), (4.2) and (4.3), (4.4) is as shown on Figure 1.

Our further reasoning related to problem (1.3), (1.4) uses the following simple observation. Let us put

Proposition 4.1 ([1])

Let and be solutions of problems (4.1), (4.2) and (4.3), (4.4), respectively, with a certain value of . Then the function

is a solution of the periodic boundary value problem (1.4) for the equation

Conversely, if a certain function is a solution of problem (1.3), (1.4), then its restrictions and to the corresponding intervals satisfy, respectively, problems (4.1), (4.2) and (4.3), (4.4).

Remark 4.2 A solution of the functional differential equation (4.7) is understood in the Carathéodory sense, and a jump of at is allowed. Note that function (4.6) is always continuous at .

The idea of Proposition 4.1 is, in fact, to rewrite the periodic boundary condition (1.4) in the form

which naturally leads us to the introduction of the parameter λ.

Proposition 4.1 allows one to treat the T-periodic problem (1.3), (1.4) as a kind of join of two independent two-point problems (4.1), (4.2) and (4.3), (4.4). Solving them independently and considering λ as an unknown parameter, one can then try to ‘glue’ their solutions together by choosing the value of λ so that (4.9) holds. The possibility of this gluing is equivalent to the solvability of the original problem. A rigorous formulation is contained in the following statement.

Proposition 4.3 ([1])

Assume that and are solutions of problems (4.1), (4.2) and (4.3), (4.4), respectively, for a certain value of . Then the function given by formula (4.6) is a solution of problem (1.3), (1.4) if and only if the equality

holds.

Conversely, if a certain is a solution of problem (1.3), (1.4), then the functions and satisfy, respectively, problems (4.1), (4.2) and (4.3), (4.4).

Introduce the functions and , , by putting , ,

for , and

for . In particular, we have

and

Functions (4.10) and (4.11), which are, in fact, appropriately scaled versions of (3.12), are involved in the estimates given in the sequel.

5 Iterations on half-intervals

As Proposition 4.3 suggests, our approach to the T-periodic problem (1.3), (1.4) requires that we first study the auxiliary problems (4.1), (4.2) and (4.3), (4.4) separately, for which purpose appropriate iteration processes will be introduced. Let us start by considering problem (4.1), (4.2). Following [1], we set

and define the recurrence sequence of functions , , by putting

for all , and . In a similar manner, for the parametrised problem (4.3), (4.4) on the interval , we introduce the sequence of functions , , according to the formulae

for all η and λ from .

The recurrence sequences determined by equalities (5.1), (5.2) and (5.3), (5.4) arise in a natural way when boundary value problems of type (4.1), (4.2) and (4.3), (4.4) are considered. It is not difficult to verify that formulae (5.1), (5.2) and (5.3), (5.4) are particular cases of those corresponding to the iteration scheme for two-point boundary value problems (see, e.g., [23]). One can also derive these formulae directly from Proposition 3.1 by carrying out, respectively, the substitutions , , and , , after which one arrives at parametrised -periodic boundary value problems on the corresponding half-intervals.

It is important to note that all the members of the sequences , , and , , satisfy, respectively, conditions (4.2) and (4.4).

Lemma 5.1 For any and , the functions and satisfy the boundary conditions

Now recall that the vector λ, which is involved in all the above-stated relations, is the ‘gluing’ parameter determining the pair of auxiliary boundary value problems (4.1), (4.2) and (4.3), (4.4), for which a continuous join described by Proposition 4.3 is possible. In this relation, the following property is important.

Lemma 5.2 Let be arbitrary. Then the equality

holds if and only if

Proof Indeed, it follows directly from (5.1) and (5.3) that and , whence the assertion is obvious for . Similarly, if , then, according to (5.2) and (5.4), we have and and, consequently, relation (5.7) is equivalent to (5.8) for any m. □
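The boundary behaviour stated in Lemmas 5.1 and 5.2 can be checked numerically on a small example. The sketch below assumes that the iterations on a half-interval [a, b] have the form u_m(t) = u_0(t) + int_a^t f(s, u_{m-1}(s)) ds - s(t) int_a^b f(s, u_{m-1}(s)) ds, where s(t) = (t - a)/(b - a) and u_0(t) = start + s(t) * jump. This is an assumed reading of (5.1)-(5.4); the helper name, the bounded non-linearity and the parameter values are hypothetical.

```python
import math


def half_interval_iterations(f, a, b, start, jump, m, n=200):
    """Samples of u_m on [a, b] with u_m(a) = start and u_m(b) - u_m(a) = jump."""
    h = (b - a) / n
    ts = [a + i * h for i in range(n + 1)]
    u = [start + (i / n) * jump for i in range(n + 1)]  # u_0 is linear in t
    for _ in range(m):
        fu = [f(t, v) for t, v in zip(ts, u)]
        integral = [0.0]
        for left, right in zip(fu, fu[1:]):
            integral.append(integral[-1] + 0.5 * h * (left + right))
        total = integral[-1]
        u = [start + (i / n) * jump + part - (i / n) * total
             for i, part in enumerate(integral)]
    return u


def f_demo(t, x):
    """Illustrative bounded non-linearity, 2-periodic in t."""
    return math.sin(x) + math.cos(math.pi * t)


T = 2.0
xi, lam = 0.2, 0.3
eta = xi + lam  # gluing relation of Lemma 5.2
x = half_interval_iterations(f_demo, 0.0, T / 2, xi, lam, m=5)
y = half_interval_iterations(f_demo, T / 2, T, eta, -lam, m=5)
print(x[-1] - x[0], y[-1] - y[0], x[-1] - y[0])
```

By construction every member of both sequences keeps the prescribed jump over its half-interval, whatever the value of m, and the two graphs meet at the midpoint exactly when eta = xi + lam.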

6 Successive approximations and their convergence

Let us now pass to the construction of the iteration scheme for the original T-periodic problem (1.3), (1.4), using the sequences and , , from the preceding section. We shall see that the graphs of the respective members of these sequences should be glued together in the sense of Lemma 5.2. Namely, we put

for any . Functions (6.1) and (6.2) will be considered only for those values of ξ and η that are located, in a sense, sufficiently far from the boundary of the domain D. More precisely, we consider from the set , which, for any non-negative vector r, is defined by the equality

Recall that we use notation (2.3). In other words, a couple of vectors belongs to if and only if every convex combination of ξ and η lies in D together with its r-neighbourhood. The inclusion implies, in particular, that and , i.e., the vectors ξ and η both belong to the set defined by formula (2.2). It is also obvious from (6.3) that for any r.

The following statement shows that sequence (6.1) is uniformly convergent and its limit is a solution of a certain perturbed problem for all which are admissible in the sense that with r sufficiently large.

Theorem 6.1 Let the vector-function satisfy the Lipschitz condition (1.5) on the set D with a matrix K such that

Moreover, assume that

Then, for an arbitrary pair of vectors :

1. The uniform, in , limit

(6.6)

exists and, moreover,

2. The function is the unique solution of the Cauchy problem

(6.8)

(6.9)

where

3. Given an arbitrarily small positive ε, one can specify a number such that

(6.11)

for all and , where is given by (3.11).

Recall that the constant involved in condition (6.4) is given by equality (2.4), while the vector arising in (6.5) is defined according to (2.1).

Remark 6.2 The error estimate (6.11) may look inconvenient because it is guaranteed starting from a sufficiently large iteration number, , depending on the value of ε which can be arbitrarily small. It is, however, quite transparent when the required constant is not ‘too close’ to (i.e., if ε is not ‘too small’). More precisely, in view of Remark 3.3, for , where

is given by formula (3.14). Consequently, inequality (6.11) with holds for an arbitrary value of .

By analogy with Theorem 6.1, under similar conditions, we can establish the uniform convergence of sequence (6.2). Namely, the following statement holds.

Theorem 6.3 Assume that the vector-function f satisfies conditions (1.5), (6.4) and, moreover,

Then, for all fixed :

1. The uniform, in , limit

(6.13)

exists and, moreover,

2. The function is the unique solution of the Cauchy problem

(6.15)

(6.16)

where

3. For an arbitrarily small positive ε, one can find a number such that

(6.18)

for all and , where is given by (3.11).

Remark 6.4 Similarly to Remark 6.2, one can conclude that the validity of estimate (6.18) is ensured for all provided that with given by formula (3.14).

Theorems 6.1 and 6.3 are improved versions of Theorems 1 and 2 from [1], and their proofs follow the lines of those given therein. The main difference here is the use of Lemma 7.2 in order to guarantee that the values of the iterations do not escape from D. The rest of the argument is similar to that of [1], and we omit it.

Note that the assumptions of Theorems 6.1 and 6.3 differ from each other in conditions (6.5) and (6.12) only. Therefore, by putting

we arrive immediately at the following statement summarising the last two theorems.

Theorem 6.5 Assume that the function f satisfies the Lipschitz condition (1.5) in D with K satisfying relation (6.4) and, moreover, D is such that

Then, for any , the assertions of Theorems 6.1 and 6.3 hold.

Recall that D is the main domain where the Lipschitz condition (1.5) is assumed, whereas is the subset of defined according to (6.3). The latter set is, in a sense, a two-dimensional analogue of and, as has already been noted above, the inclusion

is true. By virtue of (6.21), assumption (6.20) implies in particular that

which is a condition of type (3.6) appearing in Proposition 3.1 (see Figure 2). It turns out that, in the case of a convex domain, condition (6.20) can always be replaced by (6.22). Indeed, the following statement holds.

Lemma 6.6 If the domain D is convex, then the corresponding set has the form

Proof In view of (6.21), it is sufficient to show that

Indeed, let us put (the assertion is, of course, true for any non-negative vector r, but the present formulation is sufficient for our purposes) and assume that, on the contrary, inclusion (6.23) does not hold. Then one can specify some ξ and η such that

According to definition (6.3), relation (6.25) means the existence of certain and such that

Let us put . Then, in view of (6.26), we have

Furthermore, it is obvious that

and, consequently, z is a convex combination of and . By virtue of (2.2), (6.24) and (6.27), both vectors and belong to D and, therefore, so does z because (6.28) holds and the set D is convex. However, this contradicts relation (6.26). Thus, inclusion (6.23) holds, and our lemma is proved. □
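For a rectangular domain, the statement of Lemma 6.6 is easy to test numerically. The sketch below assumes that D is a box given by componentwise bounds and that the r-neighbourhood of a point z is the set of vectors x with |x - z| <= r componentwise, in line with the componentwise convention adopted in Section 1. The function names and the sampling over convex combinations are illustrative, and the sampled test only approximates the condition "for all convex combinations".

```python
def in_inner_neighbourhood(z, r, lo, hi):
    """True if the componentwise r-neighbourhood of z lies in the box [lo, hi]."""
    return all(l + ri <= zi <= h - ri for zi, ri, l, h in zip(z, r, lo, hi))


def in_pair_set_sampled(xi, eta, r, lo, hi, samples=101):
    """Check sampled convex combinations of xi and eta (definition-style test)."""
    for k in range(samples):
        theta = k / (samples - 1)
        z = [(1.0 - theta) * a + theta * b for a, b in zip(xi, eta)]
        if not in_inner_neighbourhood(z, r, lo, hi):
            return False
    return True


def in_pair_set_convex(xi, eta, r, lo, hi):
    """Endpoint test, which suffices for a convex domain by Lemma 6.6."""
    return (in_inner_neighbourhood(xi, r, lo, hi)
            and in_inner_neighbourhood(eta, r, lo, hi))


lo, hi, r = [0.0, 0.0], [1.0, 1.0], [0.1, 0.1]
pairs = [([0.2, 0.2], [0.8, 0.5]), ([0.05, 0.5], [0.5, 0.5])]
for xi, eta in pairs:
    print(in_pair_set_sampled(xi, eta, r, lo, hi),
          in_pair_set_convex(xi, eta, r, lo, hi))
```

For a box the two tests agree on these pairs, which reflects the product structure asserted by the lemma; for a non-convex D only the definition-style test would be meaningful.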

By virtue of Lemma 6.6, the assertion of Theorem 6.5 for f Lipschitzian in a convex domain can be reformulated as follows.

Corollary 6.7 Let f satisfy conditions (1.5) and (6.4). If, moreover, the domain D is convex and (6.22) holds, then, for any ξ and η from , all the assertions of Theorems 6.1 and 6.3 hold.

The convexity assumption on D is rather natural and, in fact, the domain where the Lipschitz condition for the non-linearity is verified most frequently has the form of a ball (in our case, where the inequalities between vectors are understood componentwise, it is an n-dimensional rectangular parallelepiped).

We note that the smallness assumption (6.4), which guarantees the convergence of iterations in Corollary 6.7, is twice as weak as the corresponding condition (3.5) of Proposition 3.1:

Furthermore, it is rather interesting to observe that the condition on inner neighbourhoods also becomes less restrictive after the interval halving has been carried out. Indeed, it is clear from (2.1) and (6.19) that, for condition (6.22) of Corollary 6.7 to be satisfied, it would be sufficient if

whereas, at the same time, assumption (3.6) of Proposition 3.1 would require the relation

The radius of the inner neighbourhood in (6.30) is less by half. Comparing (6.4) and (6.30) with the corresponding conditions (6.29) and (6.31) arising in Proposition 3.1, we conclude that the idea of interval halving described above thus allows us to improve the original scheme of periodic successive approximations in both directions.

Theorem 6.5 suggests that the iteration sequences (5.2) and (5.4) can be used to construct the solutions of auxiliary problems (4.1), (4.2) and (4.3), (4.4) and ultimately of the original problem (1.3), (1.4). A further analysis, which will lead us to an existence theorem, involves determining equations. Before continuing, we give some auxiliary statements.

7 Auxiliary statements

Several technical lemmata given below are needed in the proof of Theorems 6.1 and 6.3. We implicitly assume in the formulations that condition (6.20) is satisfied.

Given arbitrary and , put

for all . The linear mapping , which obviously transforms the space to itself, is in fact a scaled version of the corresponding projection operator used rather frequently in studies of the periodic boundary problem (see, e.g., [12]). In our case, properties of this mapping are used when estimating the values of the Nemytskii operator generated by the function f involved in equation (1.3).

Lemma 7.1 Let and be arbitrary functions such that and . Then:

1. For ,

(7.2)

2. For ,

(7.3)

Recall that and are functions (4.12), (4.13), and the vectors , are defined according to (2.1). The proof of Lemma 7.1 is almost a literal repetition of that of [1, Lemma 7] and uses the estimate obtained in [22, Lemma 3].

Lemma 7.2 For arbitrary and , the inclusions

and

hold.

Proof

Let us fix an arbitrary pair of vectors

and prove, e.g., relation (7.4). We shall argue by induction. Indeed, in view of (5.1),

for . This means that, at every point t from , the value of is a convex combination of ξ and η. Recalling definition (6.3) of the set and using assumption (7.6), we conclude that all the values of the function lie in D, i.e., (7.4) holds with .

Assume now that

for a certain value of m and show that the inclusion

holds as well. Indeed, considering (5.2) and recalling notation (7.1), we conclude that, for all m, the identity

holds for any . Since the validity of inclusion (7.8) has been assumed, we see that inequality (7.2) of Lemma 7.1 can be applied and, therefore, identity (7.10) yields

for all . It follows from (7.11) that, at every point , the value lies in the -neighbourhood of a convex combination of the vectors ξ and η. Since ξ and η satisfy (7.6) and, by (6.19), , it follows from definition (6.3) of the set that all the values of the function belong to D, i.e., (7.9) holds. Thus, inclusion (7.8) is true for all m. Recalling notation (6.1), we arrive immediately at (7.4).

Relation (7.5) is proved by analogy. Indeed, it follows from (5.3) that

where for any . Since, obviously, for all , identity (7.12) and assumption (7.6) guarantee that the function has values in D. Let us assume that, for a certain m,

and show that

By virtue of (5.4), for any , we have

with the same definition of as in (7.12). According to assumption (7.13), the function has values in D. Therefore, using equality (7.15) and estimate (7.3) of Lemma 7.1, we obtain

for all . Since , inequality (7.16) implies that all the values of the function belong to the -neighbourhood of a convex combination of ξ and η. Recalling now (6.3) and (6.19) and using assumption (7.6), we arrive at (7.14). Consequently, inclusion (7.13) holds for all m, and (7.5) follows immediately from (6.2) and (7.13). The lemma is proved. □

Finally, the corresponding assertions of Theorems 6.1 and 6.3 lead us immediately to the following statement.

Lemma 7.3 Under the assumptions of Theorem 6.5, the inclusions

and

hold true for any .

The proof of Lemma 7.3 consists in passing to the limit in (7.4) and (7.5) as , the possibility of which is ensured by Theorem 6.5.

8 Limit functions and determining equations

The techniques based on the original periodic successive approximations (3.4), the applicability of which is guaranteed by Proposition 3.1, lead one to the necessary and sufficient conditions for the solvability formulated in terms of determining equations (3.15) of Proposition 3.4. A certain analogue of the last mentioned statement should also be established for our new version of the method, with iterations constructed using the interval halving procedure, for the resulting scheme to be logically complete. It is natural to expect that the limit functions of the iterations on the half-intervals will help one to formulate criteria of solvability of the original problem, and, in fact, it turns out that it is the functions and defined according to equalities (6.10) and (6.17) that provide such a characterisation.

Indeed, Theorems 6.1 and 6.3 guarantee that, under the conditions assumed, the functions and are well defined for all . Therefore, by putting

we obtain a function , which is well defined for the same values of . This function is obviously continuous.

The following theorem, which is a modified version of [1, Theorem 4], establishes a relation of this function to the original periodic problem (1.3), (1.4) in terms of the zeroes of Ξ and H.

Theorem 8.1 Let f satisfy the Lipschitz condition (1.5) with a matrix K such that (6.4) holds. Furthermore, assume that D has property (6.20). Then:

1. The function defined by (8.1) is a solution of the periodic boundary value problem (1.3), (1.4) if and only if the pair satisfies the system of 2n equations

(8.2)

2. For every solution of problem (1.3), (1.4) with , there exists a pair such that .

Equations (8.2) are usually referred to as determining or bifurcation equations [3, 12] because their roots determine solutions of the original problem. The variables involved in system (8.2) admit a natural interpretation: ξ means the value of the solution at 0, whereas η is responsible for its value at . We can observe the main difference between the unmodified periodic successive approximations (Proposition 3.1) and a similar scheme obtained after the interval halving (Theorem 6.5): the convergence condition is twice as weak but, instead of n numerical equations (3.15) of Proposition 3.4, we need to solve 2n equations (8.2) of Theorem 8.1.

A constructive solvability analysis involves a natural concept of approximate determining equations, which is discussed below.

9 Approximate determining equations

Although Theorem 8.1 provides a theoretical answer to the question on the construction of a solution of the periodic problem (1.3), (1.4), its application faces difficulties due to the fact that the explicit form of the functions and appearing in (8.2) is usually unknown. This complication can be overcome by using the functions

and

for a fixed m, which will lead one to the so-called approximate determining equations. More precisely, similarly to [12, 24], it can be shown that, under certain natural assumptions, one can replace the exact determining system (8.2) by its approximate analogue

Note that, unlike system (8.2), the mth approximate determining system (9.3) contains only terms involving the functions and and, thus, known explicitly.
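A minimal numerical sketch of an approximate determining system of this kind is given below for the scalar test equation x' = -x + sin t with T = 2π, whose exact periodic solution is (sin t - cos t)/2. It assumes that, up to normalisation, the two determining functions are eta - xi - int_0^{T/2} f(s, x_m(s)) ds and xi - eta - int_{T/2}^T f(s, y_m(s)) ds, where x_m and y_m are the half-interval iterations joining xi to eta and eta to xi. These formulas, the helper names and the shortcut for affine systems are assumptions made for illustration, not the formulas (9.1)-(9.3) themselves.

```python
import math


def half_residual(f, a, b, start, end, m, n=400):
    """Run m iterations on [a, b] with u(a) = start, u(b) = end and
    return end - start - int_a^b f(s, u_m(s)) ds (trapezoidal rule)."""
    h = (b - a) / n
    ts = [a + i * h for i in range(n + 1)]
    u = [start + (i / n) * (end - start) for i in range(n + 1)]  # u_0
    total = 0.0
    for k in range(m + 1):
        fu = [f(t, v) for t, v in zip(ts, u)]
        integral = [0.0]
        for left, right in zip(fu, fu[1:]):
            integral.append(integral[-1] + 0.5 * h * (left + right))
        total = integral[-1]
        if k < m:  # pass from u_k to u_{k+1}
            u = [start + (i / n) * (end - start) + part - (i / n) * total
                 for i, part in enumerate(integral)]
    return end - start - total


def determining(f, T, xi, eta, m):
    """Assumed approximate determining functions evaluated at (xi, eta)."""
    return (half_residual(f, 0.0, T / 2, xi, eta, m),
            half_residual(f, T / 2, T, eta, xi, m))


def solve_affine(F):
    """Root of an affine map F: R^2 -> R^2 from three evaluations."""
    f0, f1, f2 = F(0.0, 0.0), F(1.0, 0.0), F(0.0, 1.0)
    a11, a21 = f1[0] - f0[0], f1[1] - f0[1]
    a12, a22 = f2[0] - f0[0], f2[1] - f0[1]
    det = a11 * a22 - a12 * a21
    xi = (-f0[0] * a22 + f0[1] * a12) / det
    eta = (-a11 * f0[1] + a21 * f0[0]) / det
    return xi, eta


def f_lin(t, x):
    """Test right-hand side with Lipschitz constant 1 and period 2*pi."""
    return -x + math.sin(t)


T = 2.0 * math.pi
xi_m, eta_m = solve_affine(lambda a, b: determining(f_lin, T, a, b, 30))
print(xi_m, eta_m)
```

The computed pair can be compared with the values -1/2 and 1/2 of the exact solution at t = 0 and t = T/2. The affine shortcut works only because the test equation is linear in x; for a non-linear f the system of 2n equations would have to be solved by a general root finder.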

It is natural to expect that approximations to the unknown solution of (1.3), (1.4) can be obtained by using the function ,

which is an ‘approximate’ version of (8.1) well defined for all and .

The piecewise character of the definition of function (9.4) does not affect the properties that a potential approximation obtained from it should possess. Indeed,

Proposition 9.1 If ξ and η satisfy equations (9.3) for a certain m, then the function determined by equality (9.4) is continuously differentiable on .

Proof It follows immediately from (5.2), (5.4) and (9.4) that

and

Recall that, by virtue of (5.4) and (6.2),

Then, in view of (9.1) and (9.2), it follows from (9.3), (9.5) and (9.6) that

and, therefore, is continuous at . The continuous differentiability of the function at other points is obvious from its definition. □

In order to prove a statement on the solvability of problem (1.3), (1.4), we need some estimates of the functions and , , defined by (9.1) and (9.2).

Lemma 9.2 Assume that (6.20) holds. Let f satisfy the Lipschitz condition (1.5) with a matrix K such that

Then the estimates

and

hold for any values of and .

Proof Let us fix arbitrary and . Recalling (6.10) and (9.1), we obtain

By Lemma 7.3, the function has values in D and, therefore, the Lipschitz condition (1.5) can be used in (9.10). Then, applying estimate (6.11) of Theorem 6.1 with , where is given by (3.14), we obtain

Recall now that, in view of Remark 6.2 and relations (3.11) and (3.14), one has

and, therefore, (9.11) can be rewritten in the form

Furthermore, it follows from (4.12) and (4.10) that the function has the form

whence we obtain by computation that

Considering (9.12) and (9.15), we find that inequality (9.11), in fact, means that

which estimate coincides with (9.8). Note that the invertibility of the matrix is guaranteed by condition (9.7).

In a similar manner, in order to establish (9.9), we use (6.17) and (9.1) to obtain the estimate

Lemma 7.3 guarantees that all the values of the function lie in D and, therefore, the Lipschitz condition (1.5) can be used in (9.17). Estimate (6.18) of Theorem 6.1 applied with then yields

Finally, it follows from (4.13) and (4.11) by computation that

and, hence,

Consequently, by virtue of relations (9.12) and (9.20), inequality (9.18) leads us directly to the required estimate (9.9). □

10 Solvability analysis based on approximation

The argument given above allows us to draw conclusions about the solvability of the periodic problem (1.3), (1.4) on the basis of properties of iterations (5.2) and (5.4). More precisely, it turns out that the use of functions (9.1) and (9.2) allows one to study the vector field ,

the critical points of which, as we have seen in Theorem 8.1, determine the solutions of the original problem (1.3), (1.4), through its approximation

where m is fixed. In the formulation of the theorem given below, the following notion is used.

Definition 10.1 ([12])

Let r and l be positive integers and be an arbitrary non-empty set. For any pair of vector functions , , we write

if and only if there exists a function such that the strict inequality

holds for all .

Here, , , are the unit vectors,

and stands for the usual inner product in . The binary relation introduced by Definition 10.1 is a kind of strict inequality for vector functions and its properties are similar to those of the usual strict inequality sign. For example, and imply that . The last named property will be used below in the proof of Theorem 10.2.

We are now able to formulate a statement guaranteeing the solvability of the original periodic problem (1.3), (1.4) based on the information obtained in the course of computation of iterations. In contrast to the unmodified scheme of periodic successive approximations (Proposition 3.1, ), here the iterations are proved to be convergent under the assumption that is twice as weak as in the former case (Theorem 6.5, ). A similar observation can be made concerning the assumption on the domain D (see Corollary 6.7 and the remarks related to conditions (6.30) and (6.31)).

When stating the existence theorem, we restrict our consideration to a slightly weaker version of condition (6.4), where the value is replaced by 0.3, and thus neglect the gap () for ε in estimates (6.11) and (6.18).

Theorem 10.2 Assume that the function f in (1.3) satisfies the Lipschitz condition (1.5) with a matrix K such that inequality (9.7) holds and, moreover, the set D has property (6.20). Moreover, let there exist a closed domain

such that, for a certain fixed value of , the mapping given by formula (10.2) satisfies the conditions

and

where

Then there exist certain values such that the function is a solution of the periodic boundary value problem (1.3), (1.4).

Recall that the symbol in (10.7) is understood in the sense of Definition 10.1. It should be noted that condition (10.7) involves the values of functions on the boundary of Ω only.

Proof We shall use the lemmata stated above. By analogy to [12, 24], we shall prove that the fields Φ and are homotopic. It will be sufficient to consider the linear deformation

where . Indeed, it is clear that is a continuous mapping on ∂ Ω for every and, furthermore,

Let us fix an arbitrary pair . According to (10.1) and (10.2), we have

On the other hand, by Lemma 9.2, estimates (9.8) and (9.9) hold true. Using relations (9.8) and (9.9) in (10.11), we show that

and hence does not vanish on ∂ Ω for any θ. Thus, Φ is homotopic to . The property of invariance of degree under homotopy then yields

and therefore, in view of (10.6), we conclude that . Consequently, there exist vectors and possessing the properties indicated, and it only remains to refer to Theorem 8.1. The theorem is proved. □

Note that Theorem 10.2 provides solvability conditions based upon properties of approximations starting from the second one inclusively. A similar statement allowing one to use the zeroth and the first approximations can be obtained if we use [12, Lemma 3.16] instead of Lemma 3.2. In that case, condition (10.7) of Theorem 10.2 is replaced, respectively, by the relations

and

11 Approximation of a solution

The theorem proved in the preceding section can be complemented by the following natural observation. Let be a root of the approximate determining system (9.3) for a certain m. Then the function

defined according to (9.4) can be regarded as the mth approximation to a solution of the periodic problem (1.3), (1.4). This is justified by Proposition 9.1 and the estimates

for and

for , which, as is easy to see from (9.4), follow directly from Theorem 6.5. A uniform inequality, not given here, can be obtained by estimating the mapping for any fixed .

It is worth emphasising the role of the unknown parameters whose values appearing in (11.1) are determined from equations (9.3): ξ is an approximation of the initial value of the periodic solution and η is that of its value at the midpoint of the interval.

As regards the practical application of Theorem 10.2, it should be noted that, according to (10.2), the mapping is known in an analytic form because it is determined solely by the mth iteration, which has already been constructed by that point. Of course, the degree in (10.6) is the Brouwer degree because all the vector fields are finite-dimensional. Likewise, all the terms in the right-hand side of inequality (10.7) are computed explicitly (e.g., by using computer algebra systems).
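In the scalar case (n = 1) the determining system has only two unknowns, ξ and η, and for a continuous planar field that does not vanish on ∂Ω the Brouwer degree coincides with the winding number of the field along ∂Ω. The following Python sketch estimates this winding number by sampling the boundary of a rectangle. The function names, the rectangular shape of Ω and the sampling density are assumptions made for illustration only, and a sampled computation of this kind is a heuristic check, not a proof that the degree condition holds.

```python
import numpy as np

def rectangle_boundary(x0, x1, y0, y1, n=200):
    """Sample the boundary of [x0, x1] x [y0, y1] counterclockwise (4*n points)."""
    s = np.linspace(0.0, 1.0, n, endpoint=False)
    bottom = np.column_stack([x0 + s * (x1 - x0), np.full(n, y0)])
    right = np.column_stack([np.full(n, x1), y0 + s * (y1 - y0)])
    top = np.column_stack([x1 - s * (x1 - x0), np.full(n, y1)])
    left = np.column_stack([np.full(n, x0), y1 - s * (y1 - y0)])
    return np.vstack([bottom, right, top, left])

def winding_number(field, boundary_pts):
    """Winding number of a planar field along a closed, sampled curve.

    `field` maps a point (xi, eta) to a pair of reals and is assumed
    not to vanish on the curve; the sampling must be fine enough that
    the direction of the field turns by less than pi between samples.
    """
    vals = np.array([field(p) for p in boundary_pts], dtype=float)
    ang = np.arctan2(vals[:, 1], vals[:, 0])
    # Angle increments along the closed curve, wrapped into [-pi, pi).
    d = np.diff(np.append(ang, ang[0]))
    d = (d + np.pi) % (2.0 * np.pi) - np.pi
    return int(round(d.sum() / (2.0 * np.pi)))

# Hypothetical fields used only to exercise the routine.
pts = rectangle_boundary(-1.0, 1.0, -1.0, 1.0)
identity_degree = winding_number(lambda p: (p[0], p[1]), pts)
no_zero_degree = winding_number(lambda p: (p[0] + 5.0, p[1]), pts)
```

In an application, `field` would be replaced by the explicitly known approximate mapping from (10.2); a non-zero result supports, but does not rigorously establish, the degree condition (10.6).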

12 An example

Let us consider the scalar π-periodic boundary value problem

where , . It is easy to check that the function

is a solution of problem (12.1), (12.2). This solution has values in the domain , where, as one can verify, the convergence condition (3.5) is not satisfied. However, the corresponding condition with the doubled constant (3.18) does hold, and therefore, the interval halving technique can be used.

The appropriate computations, which have been carried out by using Maple 14 and are omitted here, show that the approach based on Theorems 6.5 and 10.2 is indeed applicable in this case. The existence of solution (12.3) (let us forget for a moment that we know it explicitly in this academic example) is established by Theorem 10.2, whereas its approximations of type (11.1) are constructed as described above. For instance, in the first approximation, we have with

where is the indicator function (4.5) and

and

The numerical values of the parameters ξ and η corresponding to functions (12.4), (12.5) (see Table 1) are found from the system of equations (9.3) with , which, in this case, have the form

and

The graphs obtained in the course of computation are shown in Figures 3 and 4, whereas Table 1 contains the corresponding numerical values of the parameters. Note that only the zeroth approximation has a derivative with a discontinuity at the point where the two half-intervals meet (cf. Proposition 9.1). The graphs and the computed numerical values of the parameters show a rather good accuracy of approximation.

13 Comments

Several points can be outlined in relation to the techniques discussed in the preceding sections.

13.1 Approximation scheme in practice

An interesting feature of the approach indicated here is that a practical analysis of the periodic problem (1.3), (1.4) along its lines starts directly with the computation of iterations. We construct the approximate determining equations (9.3), solve them numerically in an appropriate region, substitute the corresponding roots into (9.4), and thus form functions (11.1), which are, in a sense, candidates for approximations of a solution. Having constructed functions (11.1) for several values of m, we check their behaviour heuristically and, if it shows signs of convergence, we stop the computation and verify the assumptions of the existence theorem. If the verification is successful, then from this moment on we know that a solution exists. Either we are satisfied with the achieved accuracy of approximation (in this case, the scheme stops and the function given by (11.1) for the last computed value of m is taken as its outcome) or, for some reason, we find that a more accurate approximation is needed (one more step is then made, and a similar check is carried out for the new approximation).

It is important to observe that, once the existence of a solution is known from Theorem 10.2 at the mth step of iteration, we immediately obtain an approximation to it in the form (11.1). The scheme thus allows us both to study the solvability of the periodic problem and to construct approximations to its solution.
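The workflow described in this subsection can be illustrated by a small numerical sketch in Python. Since formulas (5.2), (5.4) and (9.3) are not reproduced in this text, the code assumes one natural form of the halved scheme: on each half-interval the iteration is the two-point successive approximation u ↦ start + ∫f − s·∫f + s·(end − start), with s running from 0 to 1, and the two determining equations require the integral of f over each half to equal the corresponding increment of the solution. The test problem, the grid size, the trapezoidal quadrature and all function names are hypothetical, and the analytic computation of the paper is replaced here by discretisation.

```python
import numpy as np

def cumtrapz(y, t):
    """Cumulative trapezoidal integral of y over the grid t (zero at t[0])."""
    out = np.zeros_like(y)
    out[1:] = np.cumsum(0.5 * (y[1:] + y[:-1]) * np.diff(t))
    return out

def iterate_half(f, t, start, end, m):
    """m successive approximations on one half-interval (assumed form).

    Every iterate satisfies u(t[0]) = start and u(t[-1]) = end.
    """
    s = (t - t[0]) / (t[-1] - t[0])          # runs from 0 to 1
    u = start + s * (end - start)            # zeroth approximation: a straight line
    for _ in range(m):
        c = cumtrapz(f(t, u), t)
        u = start + c - s * c[-1] + s * (end - start)
    return u

def determining(f, T, z, m, n=801):
    """Approximate determining functions for z = (xi, eta) (assumed form)."""
    xi, eta = z
    t1 = np.linspace(0.0, T / 2.0, n)
    t2 = np.linspace(T / 2.0, T, n)
    x = iterate_half(f, t1, xi, eta, m)      # first half: from xi to eta
    y = iterate_half(f, t2, eta, xi, m)      # second half: from eta back to xi
    F = np.array([eta - xi - cumtrapz(f(t1, x), t1)[-1],
                  xi - eta - cumtrapz(f(t2, y), t2)[-1]])
    return F, (t1, x, t2, y)

def solve_determining(f, T, z0, m, newton_steps=5, h=1e-6):
    """Newton iteration with a forward-difference Jacobian for the 2x2 system."""
    z = np.array(z0, dtype=float)
    for _ in range(newton_steps):
        F, _ = determining(f, T, z, m)
        J = np.empty((2, 2))
        for j in range(2):
            zh = z.copy()
            zh[j] += h
            J[:, j] = (determining(f, T, zh, m)[0] - F) / h
        z = z - np.linalg.solve(J, F)
    return z, determining(f, T, z, m)[1]

# Hypothetical test problem: x' = -a (x - cos t) - sin t has the
# 2*pi-periodic solution x(t) = cos t, so xi = 1 and eta = -1 are expected.
a = 0.5
f_example = lambda t, x: -a * (x - np.cos(t)) - np.sin(t)
z_example, pieces = solve_determining(f_example, 2.0 * np.pi, [0.0, 0.0], m=16)
```

The pair `z_example` plays the role of the root (ξ, η) of the approximate determining system, and the arrays in `pieces` are the two halves of the resulting approximation of type (11.1), joined continuously at the midpoint.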

It should be noted that the ability to derive the fact of solvability of the original problem from the corresponding properties of approximate problems is rather uncommon (see [12] for some details). For numerical methods, the generic situation is, in fact, quite the reverse: one technique or another is applied to solve a problem which is a priori assumed to be solvable.

13.2 Extension to other problems

The idea expressed above can easily be adapted to differential equations with argument deviations. The only issue that should be clarified in that case is the definition of iterations on the half-intervals at those points which are thrown over the middle to the adjacent half-interval. For this purpose, sequences (5.2) and (5.4) should be computed simultaneously, with (5.4) serving as an initial function for (5.2) at the next step, and vice versa.

Likewise, with appropriate modifications, the technique developed here can be applied to problems with boundary conditions other than periodic ones. We do not dwell on this topic here.

13.3 Variable subinterval lengths

It is, of course, not necessary to keep the ratio of subinterval lengths. For example, if there is a point such that is much greater than , it is natural to continue the halving, or any other kind of division, on . This is reminiscent of the idea used in adaptive numerical methods with a variable step length.

13.4 Applicability on small intervals

In contrast to purely numerical approaches, where one may be forced to discretise with a tiny step, the efficiency of the technique based on Theorem 6.5 is not so much affected by the smallness of the interval. This makes the scheme well applicable, in particular, for the study of high-frequency oscillations.

13.5 Advantages over other methods

The proposed technique has some other positive features distinguishing it from other approaches. For example, when applying it, one experiences no difficulties with the selection of the starting approximation (in contrast, e.g., to monotone iterative methods); there is no need to re-calculate considerable amounts of data when passing to the next step of approximation (unlike projection methods); the global Lipschitz condition and the assumption on the unique solvability of the Cauchy problem are not necessary (unlike the shooting method); etc. As regards the last mentioned condition, one should note that, for functional differential equations, it is violated even in very simple cases, and it is thus unnatural to require it when constructing a scheme of analysis of a reasonably wide class of problems.

13.6 Repeated interval halving

The interval halving procedure can be repeated. When doing so, we observe that the conditions on both the eigenvalues of the Lipschitz matrix and the size of the domain are weakened by half at each step. Indeed, it follows immediately from Corollary 6.7 that the periodic successive approximation scheme constructed with k interval halvings is applicable provided that

and

It is also clear that the , , is a strictly increasing sequence of sets tending to the original domain D in the limit as k grows to ∞. In other words, rather interestingly, the scheme suggested here is theoretically applicable however large the eigenvalues of K may be.

The side-effect of successive interval halving is the increase of the dimension of the system of determining equations, which contains 2^k n equations after the kth interval halving. One can regard this as a certain price to be paid for being able to apply interval halving in order to convert a divergent iteration scheme into a convergent one.

In this way, by carrying out interval halving sequentially, one can, in particular, re-establish the convergence of numerical-analytic algorithms for systems of ordinary differential equations with globally Lipschitzian non-linearities (see [12, 25, 26]).

13.7 Combination with other methods

The most difficult part of the scheme discussed consists in the analytic construction of as many members of the parametrised iteration sequence (9.4) as are sufficient to establish the solvability of the periodic problem (see conditions (10.6), (10.7)) and to achieve the required precision of approximation in (11.1). Its practical implementation, usually done by using symbolic computation systems, can be considerably facilitated by combining the analytic computation with a suitable kind of approximation. The use of polynomial or trigonometric interpolation (see [10, 27]) is very convenient for this purpose.
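As an illustration of how polynomial interpolation can replace exact symbolic integration, the following Python sketch performs one iteration step on a subinterval [a, b] by interpolating the integrand at Chebyshev nodes and integrating the interpolant exactly. It relies on `numpy.polynomial.Chebyshev`; the form of the iteration step (the same assumed two-point form, with prescribed values at both ends of the subinterval), the polynomial degree and the function name `picard_step_cheb` are assumptions for illustration and are not taken from [10, 27].

```python
import numpy as np
from numpy.polynomial import Chebyshev

def picard_step_cheb(f, u, a, b, start, end, deg=20):
    """One successive-approximation step on [a, b] using Chebyshev interpolation.

    f     : right-hand side f(t, x), vectorised in t
    u     : current iterate, a callable (for example a Chebyshev series)
    start : prescribed value at t = a
    end   : prescribed value at t = b
    Returns the next iterate as a Chebyshev series on [a, b].
    """
    # Polynomial interpolant of the integrand t -> f(t, u(t)) on [a, b].
    g = Chebyshev.interpolate(lambda t: f(t, u(t)), deg, domain=[a, b])
    # Antiderivative of the interpolant that vanishes at t = a.
    G = g.integ(lbnd=a)
    # s(t) = (t - a) / (b - a), written in the Chebyshev basis on [a, b].
    s = Chebyshev([0.5, 0.5], domain=[a, b])
    return G - s * float(G(b)) + s * float(end - start) + float(start)

# Hypothetical usage: with f(t, x) = cos t on [0, pi] and zero end values,
# the step returns a polynomial approximation of sin t.
u0 = Chebyshev([0.0], domain=[0.0, np.pi])
u1 = picard_step_cheb(lambda t, x: np.cos(t) + 0.0 * x, u0, 0.0, np.pi, 0.0, 0.0)
```

Each iterate is then a polynomial of fixed degree, so the cost of a step does not grow with the iteration number, at the price of an interpolation error that has to be controlled separately.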

13.8 Non-degeneracy condition for higher-order approximations

It is obvious from (9.7) and (10.8) that and, hence, the right-hand side of inequality (10.7) vanishes when m grows to +∞. On the other hand, it is easy to see that, under the conditions assumed, the mapping (uniformly on compact sets) converges to Φ as m tends to +∞. We thus arrive at the interesting observation that assumption (10.7) of Theorem 10.2, which is the main condition ensuring the non-degeneracy of the homotopy, has the form of the strict inequality

where approaches to while the term becomes arbitrarily small as m grows to +∞.

13.9 Relation to continuation theorems

Theorem 10.2 and similar statements can also be applied at the zeroth step of iteration, i.e., when one does not perform any iteration at all. This is reminiscent of the notion of a generating system appearing, e.g., in asymptotic methods.

Indeed, having in mind Theorem 10.2 in its present formulation and recalling condition (10.12), let us put

for any . Recall that is a subset of which a priori contains the value for the periodic solution in question.

By using Theorem 10.2 for with condition (10.7) replaced by (10.12), we obtain the following statement on the solvability of the periodic problem (1.3), (1.4).

Corollary 13.1 Let assumption (6.20) hold and let the convergence condition (9.7) be satisfied. Furthermore, let there exist a closed domain such that

and

Then the periodic boundary value problem (1.3), (1.4) has at least one solution which has values in D and, moreover, is such that .

Recall that the vectors and are computed directly according to formula (2.1), whereas the relation sign introduced in Definition 10.1 means that, at every point of ∂Ω, the strict inequality ‘>’ holds for at least one row, and the number of that row may vary with the point.
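The row-wise meaning of this relation can be checked on sampled boundary points as in the following Python sketch. The function name and the array layout (one row per sampled point of ∂Ω, one column per component) are assumptions made for illustration; a check on finitely many samples is only a heuristic indication that the relation holds on the whole boundary.

```python
import numpy as np

def dominates_on_samples(g_vals, h_vals):
    """Return True if, at every sampled point, g > h in at least one component.

    g_vals, h_vals : arrays of shape (number_of_points, number_of_components)
    The index of the component in which the strict inequality holds
    is allowed to change from point to point.
    """
    g = np.asarray(g_vals, dtype=float)
    h = np.asarray(h_vals, dtype=float)
    return bool(np.all(np.any(g > h, axis=1)))

# Hypothetical values at two boundary points:
# the first point is decided by component 0, the second by component 1.
ok = dominates_on_samples([[1.0, 0.0], [0.0, 2.0]], [[0.5, 1.0], [1.0, 1.0]])
```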

Assumptions of type (6.12), (13.5) are natural from various points of view. For example, let us imagine for a while that no interval halving has been carried out at all and thus, instead of Theorem 6.5, we are in the situation described by Proposition 3.1 with , and . The system of 2n determining equations (8.2) then turns back into the n-dimensional system (3.15),

the zeroth approximation of which, in the sense of the iteration process (3.4), has the form

Therefore, assumption (6.12) becomes

with a suitable domain , where

for . Then, using [12, Lemma 3.26] with , one easily shows that the following statement holds.

Corollary 13.2 The conditions (13.7), and

are sufficient for the solvability of the periodic problem (1.3), (1.4).

Arguing in this manner, we can obtain, in particular, the well-known theorem of Mawhin [28], with (13.7) being the solvability condition for the generating equation (of course, one could use a condition of a priori bounds type instead of (13.9) for a closer resemblance). In this context, Corollary 13.1 can be regarded as a ‘halved’ analogue of the last mentioned statement, where the equations

determine the initial data of the zeroth approximation. The side-effect of halving is visible from the presence of two independent variables, ξ and η, due to which system (13.10), (13.11), in contrast to (13.6), contains n extra equations.

It should be noted that the convergence of the iteration scheme in Corollary 13.1 is guaranteed under the assumption , which is twice as weak as the corresponding condition of Corollary 13.2 ().

References

Rontó A, Rontó M: Periodic successive approximations and interval halving. Miskolc Math. Notes 2012, 13(2):459-482.

Nirenberg L: Topics in Nonlinear Functional Analysis. Courant Institute of Mathematical Sciences New York University, New York; 1974. (With a chapter by E. Zehnder, Notes by R. A. Artino, Lecture Notes, 1973-1974)

Gaines RE, Mawhin JL: Coincidence Degree, and Nonlinear Differential Equations. Lecture Notes in Mathematics 568. Springer, Berlin; 1977.

Cesari L: Asymptotic Behavior and Stability Problems in Ordinary Differential Equations. 2nd edition. Ergebnisse der Mathematik und Ihrer Grenzgebiete, N. F. 16. Academic Press, New York; 1963.

Hale JK: Oscillations in Nonlinear Systems. McGraw-Hill, New York; 1963.

Samoilenko AM: A numerical-analytic method for investigation of periodic systems of ordinary differential equations. I. Ukr. Math. J. 1965, 17(4):82-93. 10.1007/BF02526569

Samoilenko AM: A numerical-analytic method for investigation of periodic systems of ordinary differential equations. II. Ukr. Math. J. 1966, 18(2):50-59. 10.1007/BF02537778

Samoilenko AM: On a sequence of polynomials and the radius of convergence of its Abel-Poisson sum. Ukr. Math. J. 2003, 55(7):1119-1130. doi:10.1023/B:UKMA.0000010610.69570.13

Samoilenko AM, Ronto NI: Numerical-Analytic Methods of Investigating Periodic Solutions. Mir, Moscow; 1979. (With a foreword by Yu. A. Mitropolskii)

Samoilenko AM, Ronto NI: Numerical-Analytic Methods of Investigation of Boundary-Value Problems. Naukova Dumka, Kiev; 1986. (In Russian, with an English summary, edited and with a preface by Yu. A. Mitropolskii)

Samoilenko AM, Ronto NI: Numerical-Analytic Methods in the Theory of Boundary-Value Problems for Ordinary Differential Equations. Naukova Dumka, Kiev; 1992. (In Russian, edited and with a preface by Yu. A. Mitropolskii)

Rontó A, Rontó M: Successive approximation techniques in non-linear boundary value problems for ordinary differential equations. In Handbook of Differential Equations: Ordinary Differential Equations. Vol. IV. Elsevier/North-Holland, Amsterdam; 2008:441-592.

Rontó A, Rontó M: Successive approximation method for some linear boundary value problems for differential equations with a special type of argument deviation. Miskolc Math. Notes 2009, 10: 69-95.

Rontó A, Rontó M: On a Cauchy-Nicoletti type three-point boundary value problem for linear differential equations with argument deviations. Miskolc Math. Notes 2009, 10(2):173-205.

Rontó A, Rontó M: On nonseparated three-point boundary value problems for linear functional differential equations. Abstr. Appl. Anal. 2011, 2011: Article ID 326052. doi:10.1155/2011/326052

Ronto A, Rontó M: A note on the numerical-analytic method for nonlinear two-point boundary-value problems. Nonlinear Oscil. 2001, 4: 112-128.

Rontó A, Rontó M: On some symmetric properties of periodic solutions. Nonlinear Oscil. 2003, 6: 82-107. doi:10.1023/A:1024827821289

Rontó M, Shchobak N: On the numerical-analytic investigation of parametrized problems with nonlinear boundary conditions. Nonlinear Oscil. 2003, 6(4):469-496. doi:10.1023/B:NONO.0000028586.11256.d7

Rontó M, Shchobak N: On parametrization for a non-linear boundary value problem with separated conditions. Electron. J. Qual. Theory Differ. Equ. 2007, 18: 1-16.

Ronto AN, Ronto M, Shchobak NM: On the parametrization of three-point nonlinear boundary value problems. Nonlinear Oscil. 2004, 7(3):384-402. 10.1007/s11072-005-0019-5

Ronto AN, Rontó M, Samoilenko AM, Trofimchuk SI: On periodic solutions of autonomous difference equations. Georgian Math. J. 2001, 8: 135-164.

Rontó M, Mészáros J: Some remarks on the convergence of the numerical-analytical method of successive approximations. Ukr. Math. J. 1996, 48: 101-107. doi:10.1007/BF02390987

Rontó M, Samoilenko AM: Numerical-Analytic Methods in the Theory of Boundary-Value Problems. World Scientific, River Edge; 2000. (With a preface by Yu. A. Mitropolsky and an appendix by the authors and S. I. Trofimchuk)

Rontó A, Rontó M: Existence results for three-point boundary value problems for systems of linear functional differential equations. Carpath. J. Math. 2012, 28: 163-182.

Kwapisz M: On modifications of the integral equation of Samoilenko’s numerical-analytic method of solving boundary value problems. Math. Nachr. 1992, 157: 125-135.

Kwapisz M: On modification of Samoilenko’s numerical-analytic method of solving boundary value problems for difference equations. Appl. Math. 1993, 38(2):133-144.

Rontó A, Rontó M, Holubová G, Nečesal P: Numerical-analytic technique for investigation of solutions of some nonlinear equations with Dirichlet conditions. Bound. Value Probl. 2011, 2011: Article ID 58. doi:10.1186/1687-2770-2011-58

Mawhin J: Topological Degree Methods in Nonlinear Boundary Value Problems. CBMS Regional Conference Series in Mathematics 40. Am. Math. Soc., Providence; 1979. (Expository lectures from the CBMS Regional Conference held at Harvey Mudd College, Claremont, Calif., June 9-15, 1977)

Acknowledgements

Dedicated to Professor Jean Mawhin on the occasion of his 70th birthday.

The work was supported in part by RVO: 67985840 (A. Rontó). This research was carried out as part of the TAMOP-4.2.1.B-10/2/KONV-2010-0001 project with support from the European Union, co-financed by the European Social Fund (M. Rontó).

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

The initial draft was prepared mainly by the first two authors, while the third one carried out the numerical computations and an overall check of estimates. All the authors contributed equally to the final version of this work and approved its present form.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Rontó, A., Rontó, M. & Shchobak, N. Constructive analysis of periodic solutions with interval halving. Bound Value Probl 2013, 57 (2013). https://doi.org/10.1186/1687-2770-2013-57

DOI: https://doi.org/10.1186/1687-2770-2013-57